Open Source, Closed Source, and the Part Nobody Puts in the Press Release

Cal.com went closed source. Warp went open source. The real question is who can inspect, secure, change, and leave the tools your business depends on.

If you are not a software person, the open source vs. closed source debate can sound like developer religion.

It is really a business trust question.

When a tool handles your calendar, customer data, internal workflows, code, finances, or AI agents, you are not just choosing features. You are choosing who gets to see how the system works, who can fix it, who can change the rules, and what happens if the company behind it changes direction.

The short version

Cal.com went closed source because AI makes vulnerability discovery cheaper.

Warp went open source because AI agents make community development cheaper.

Anthropic is holding back Claude Mythos Preview because the security risk is real.

OpenAI's structure changes show how messy these decisions get once governance, data, contracts, and customer trust are involved.

My take:

Open source is not magic.

Closed source is not evil.

AI did not settle the debate.

It made the trust architecture harder to ignore.

If that is enough, you have the point.

If you want the fuller version, here is why I think this matters for anyone building a business on top of tools they do not fully control.

Trust architecture is not just code. It is contracts, access, data, operations, and the systems underneath the product.

The reason this is worth unpacking is that two companies just made opposite bets and both pointed at the same technology.

Cal.com went closed source.

Warp went open source.

Same AI moment. Opposite conclusion.

Quick level set: what open source actually means

Open source means the source code is public. People can inspect it, learn from it, suggest changes, fork it, and sometimes run it themselves.

It does not always mean free, safe, or easy.

Closed source means the company keeps the code private. You can use the product, but you cannot see exactly how it works. You are trusting the company, its security team, its incentives, its contracts, and its future decisions.

That does not automatically mean unsafe. Plenty of closed source software is excellent. Plenty of open source software is a mess.

The difference is control.

With open source, more people can inspect the system. More people can contribute fixes. If the company disappears or changes terms, the community may still have the code.

With closed source, the company can move faster, protect more of its business logic, reduce some attack surface, and control the whole experience.

The AI part makes the tradeoff sharper.

The spectrum most people already understand

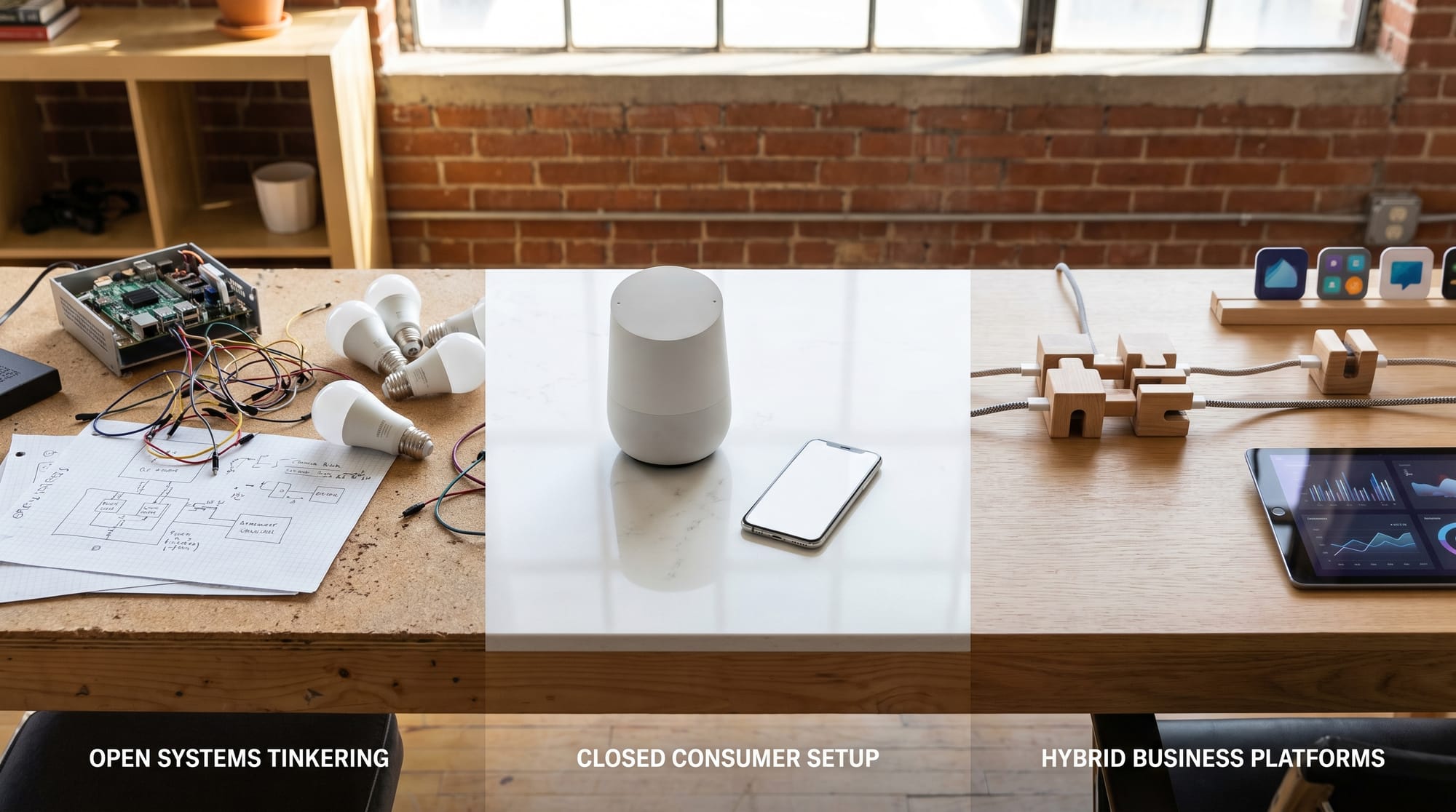

The easiest way to think about this is as a spectrum.

Open systems, closed systems, and the middle ground where most business platforms actually live.

At one end, you have open systems.

Home Assistant is my favorite everyday example. It is open source home automation built around local control and privacy. You can run it yourself. You can inspect it. You can connect thousands of devices. If a manufacturer does not support something you need, there is a decent chance someone in the community has already built an integration.

That is the open source promise:

You are not waiting for one company to care about your edge case.

WordPress is the larger business example. It powers more than 43 percent of the web, and the reason it survived this long is not because the core editor is perfect. It survived because the ecosystem is enormous. Themes, plugins, hosting companies, agencies, developers, tutorials, contributors, and businesses all orbit the same open foundation.

Open source creates weird gravity when it works.

At the other end, you have closed systems.

Google Home, Apple Home, iOS, Notion, Slack, Calendly, QuickBooks, and most SaaS tools people use every day are closed source products. You cannot inspect the server code. You cannot fork them. You cannot keep the product alive if the company kills the feature you depend on.

But the tradeoff is obvious:

They are polished, supported, and easier to start using. They reduce choices, which is sometimes exactly what you want.

My wife uses Google Home because it mostly just works. I use Home Assistant because I want control. Neither of us is morally superior. We just have different tolerance for tinkering.

Then there is the middle.

This is where a lot of business software lives.

Airtable is closed source, but it gives you APIs, scripting, extensions, automations, interfaces, and a marketplace around the product. You cannot fork Airtable. But you can build a lot on top of Airtable.

Salesforce is the enterprise version of that idea. The core platform is closed, but the AppExchange ecosystem lets customers extend Salesforce with apps, components, consultants, and industry specific solutions.

That hybrid model matters. It is not ownership in the open source sense. It is not a sealed box either. It is a closed platform with controlled openness around the edges.

That is where tools like Airtable Hyperagent get interesting to me. Hyperagent is not open source like OpenClaw. But it sits on top of a platform designed for structured data, integrations, and controlled extensibility. For a business, that may be the more practical version of openness.

So when I talk about OpenClaw versus tools like Hyperagent, this is the distinction I am really making:

OpenClaw is closer to Home Assistant: powerful, flexible, inspectable, community driven, and more work.

Hyperagent is closer to Airtable or Salesforce: more controlled, more polished, less inspectable, and easier to govern.

Both can be the right answer.

The mistake is pretending they solve the same trust problem.

What Cal.com did

Cal.com has been one of my favorite scheduling tools for a while. I use it, recommend it, and prefer it over Calendly.

It was also a commercial open source company.

That meant the public could see and contribute to the code, while Cal.com built a business around hosting, support, enterprise features, and a polished product.

On April 14, Cal.com announced it was moving its production codebase closed source after five years in public. Their stated reason was AI security.

The short version of their argument:

AI can scan open codebases for vulnerabilities faster than humans can patch them.

If the blueprint is public, attackers move faster.

Cal.com is keeping an open source version alive as Cal.diy, but the production product and many commercial features are moving behind the wall.

I understand the concern. Calendar software touches sensitive data: customer names, meeting links, availability, integrations with Google Calendar, Zoom, Stripe, CRMs, internal workflows, and sometimes sales processes. If that system gets compromised, it is not theoretical.

But I also think "AI made us do it" is too vague to be the whole explanation.

Security is one reason a company might close source. Business model pressure is another. Investor expectations, competitive pressure, and community overhead all matter too.

Maintaining a public repo is work. Issues, pull requests, roadmap debates, license questions, documentation, support, and people arguing with you on the internet.

All valid reasons.

Very different reasons.

What Warp did

Then Warp did the opposite.

Warp is the terminal I use. If you are not technical, a terminal is the text based command center developers use to control their computer, run code, manage servers, and automate work.

It is not a casual consumer app. It is a power tool.

On April 28, Warp announced that its client codebase is now open source. The repo is public. Most of the code is AGPL v3. Its UI framework crates are MIT licensed.

Warp's argument is almost the mirror image of Cal.com's.

They are saying AI agents make open source easier to maintain.

The community can report issues, argue about direction, test behavior, and review decisions. Agents can help with specs, pull requests, tests, and reviews.

In other words:

Cal.com says AI makes public code harder to defend.

Warp says AI makes public code easier to improve.

Both can be true.

That is why this is interesting.

The security side is real

I do not want to wave away the security argument.

Anthropic recently announced Project Glasswing, an initiative built around Claude Mythos Preview.

Mythos Preview is not generally available. Anthropic says it can find and exploit serious vulnerabilities at a level that changes the cybersecurity risk equation. They are giving controlled access to selected infrastructure companies, security teams, and open source security organizations so defenders get a head start.

That supports Cal.com's fear.

If AI can find vulnerabilities that humans missed for years, open source maintainers are going to feel exposed.

But it also supports Warp's bet.

If AI can find vulnerabilities, defenders can use it too. Open source maintainers, security researchers, and infrastructure companies can use those same capabilities to harden systems faster than before.

The question is not whether AI creates risk. It does.

The question is whether the answer is hiding more code, adding more defenders, or changing how we secure software altogether.

The model training part gets weird fast

There is another layer here that is genuinely wild to think about: models are learning from other models.

OpenAI has a legitimate product called Model Distillation. The idea is simple: use outputs from a stronger, more expensive model to train a smaller, cheaper model for a specific task.

That is a real technique. It can be useful. It is not automatically shady.

It gets messy when consent and competition enter the picture.

Anthropic has alleged that DeepSeek, Moonshot, and MiniMax ran large-scale distillation campaigns against Claude, using about 24,000 fraudulent accounts and over 16 million exchanges to extract capabilities from Claude models. Anthropic says this violated its terms and access restrictions.

OpenAI has also raised concerns about DeepSeek distilling capabilities from its models.

Important caveat: these are allegations from the companies involved. I am not saying every Chinese open source model is doing this, and I am definitely not saying distillation itself is wrong.

But the recursive loop is real. Closed frontier models train smaller models. Smaller models become open releases. Other frontier models learn from public data, synthetic data, user behavior, code, benchmarks, and outputs from the broader ecosystem.

Everyone is standing on everyone. Some of it is licensed. Some of it is public. Some of it is contested. Some of it is probably going to end up in court.

So when someone says "open source" or "closed source" in AI, ask a second question:

Open or closed at which layer?

The app?

The client?

The server?

The model weights?

The training data?

The synthetic data?

The evals?

The agent tools?

The company governance?

Those are all different layers.

OpenAI is the cleanest example of how messy structure gets

OpenAI started as a nonprofit in 2015.

Structure changes look simple from the outside. Inside, someone still has to protect the data, permissions, contracts, and trust relationships.

In 2019, it created a for-profit subsidiary under the nonprofit.

In 2025, OpenAI announced a public benefit corporation structure, with the nonprofit still in control.

That is the simple public version. The inside version is never simple.

I have helped clients protect data and access during entity structure changes. Not at OpenAI scale, obviously, but the operational pattern is familiar.

The legal change is only one layer.

You also have contracts. Permissions. Customer data. Vendor access. Security boundaries. Employee comms. Banking. Insurance. Client trust. Systems that were built around one company structure and now have to keep working while the structure changes.

From the outside, these changes get compressed into a press release:

"We are evolving our structure."

"We are moving closed source."

"We are becoming a public benefit corporation."

"We are open sourcing the client."

Inside the company, it is usually hectic and full of people trying to keep the machine running while the org chart moves underneath them.

That is why I am hesitant to dunk on Cal.com.

I do not know the internal version.

But I am also hesitant to accept "AI security" as the full explanation.

Discourse had the best counterpoint

Discourse published the best counterargument I saw.

Their point was simple: attackers do not need your GitHub repo to study a SaaS app.

They can inspect browser code, mobile apps, APIs, network calls, and product behavior.

Closing source hides some implementation details.

It also locks out a lot of defenders.

That feels right to me.

Closed source can reduce some risk.

It can also create a false sense of security.

Open source can expose code. It can also create more trust, more scrutiny, more portability, and more people able to help.

The answer depends on what the company is actually protecting.

If your public repo is mostly your product moat sitting in GitHub, AI makes open source uncomfortable.

If your public repo has a real community, fast review, strong tests, and maintainers who can use AI defensively, AI might make it stronger.

What this means if you are not a developer

You do not need to have a strong opinion on software licenses to care about this.

You just need to care about the tools your business depends on.

Before you build your workflow around a product, ask a few boring questions.

Can I export my data?

Can I leave without rebuilding my entire business?

Who can inspect the system?

Who can patch it?

What happens if the company changes its pricing, license, or ownership structure?

What happens if the company gets acquired?

What happens if the open source project gets abandoned?

What data does the AI system see?

Is my data used for training?

Which parts are open, and which parts are actually controlled by the company?

Those questions matter more than the label.

Open source is not magic.

Closed source is not evil.

AI did not settle the debate.

It made the trust architecture more visible.

That is the phrase I keep coming back to: trust architecture.

Not just the code. The whole system of technical, legal, operational, and social trust around a product.

Who can see it.

Who can fix it.

Who can fork it.

Who can profit from it.

Who can leave.

Who gets protected when things go wrong.

That is the decision underneath the press release.

My current take

I still like Cal.com. I still like Warp. I still use plenty of closed source tools.

I still prefer open source when I am building something I may need to understand, modify, or keep alive if the company changes direction.

The point is not to pick a religion.

The point is to know what kind of trust you are buying.

If a company closes source because it needs to protect customer data, say that. If it closes source because the business model got awkward, say that too.

If it opens source because the community is the product advantage, say that. If it opens source because agents now make community development cheaper, that is genuinely interesting.

But "AI made us do it" is not enough.

AI is the pressure. The decision is still human.

Hit reply: what is one tool your business depends on that you would be nervous to lose overnight?

Share & Subscribe

Know someone building a business on tools they do not fully control? Forward this to them. The point is not to scare them off their stack. It is to help them ask better questions before the stack becomes load-bearing.

This is Automate With Rob, a newsletter about building with AI tools without losing your mind. If someone forwarded this to you, subscribe here.

Related: the broader 2026 Operator's Glossary covers MCP, SaaS, LLM economics, and the 20 companies shaping these trust decisions.